Introducing Pomerium Zero

For teams that want a hosted solution without giving up data governance.

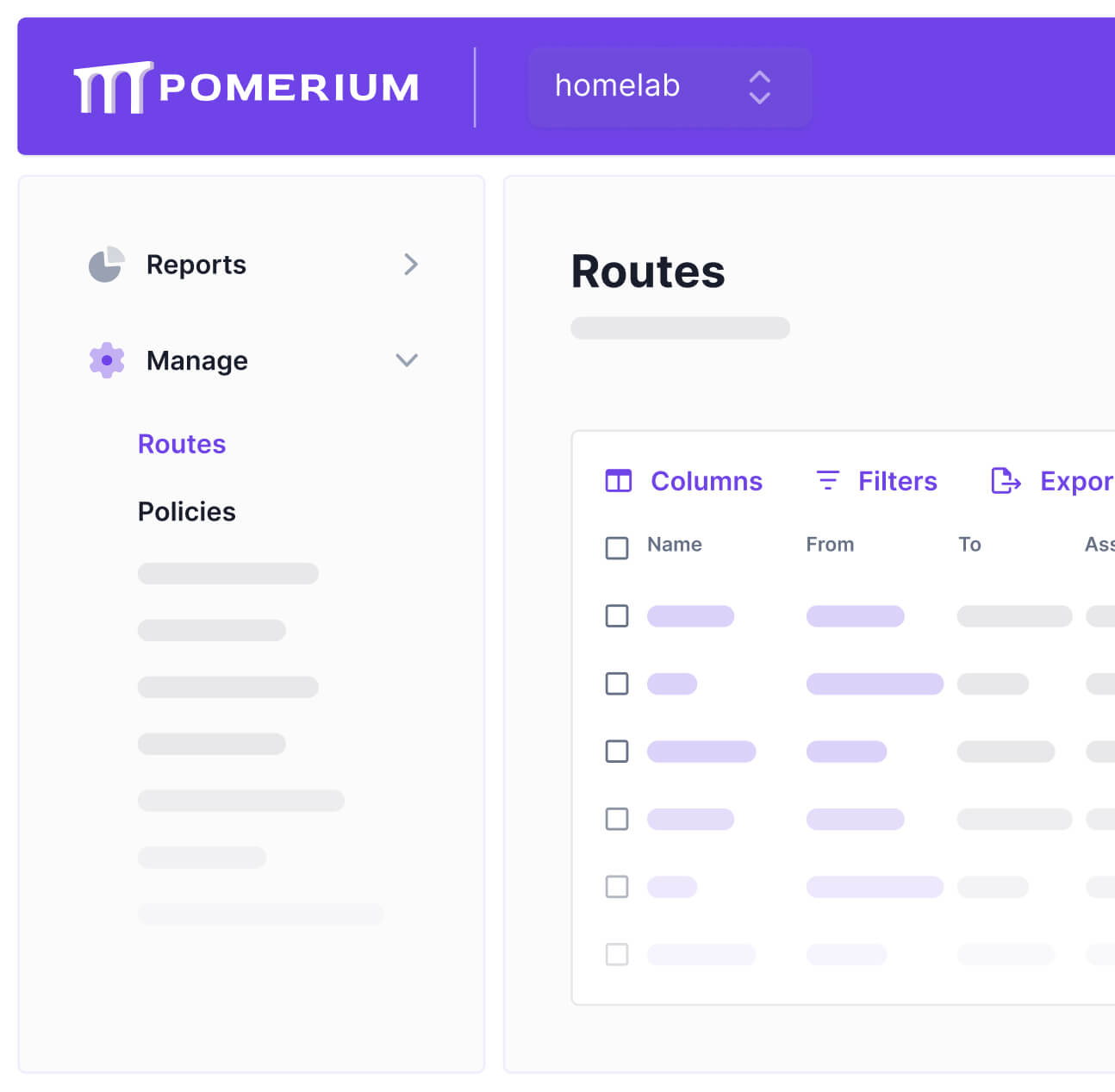

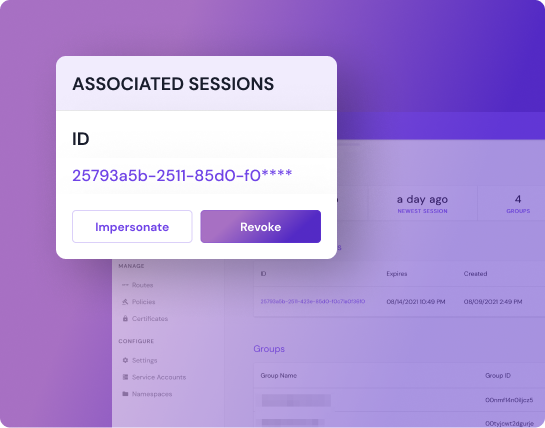

Easily manage your deployments and users

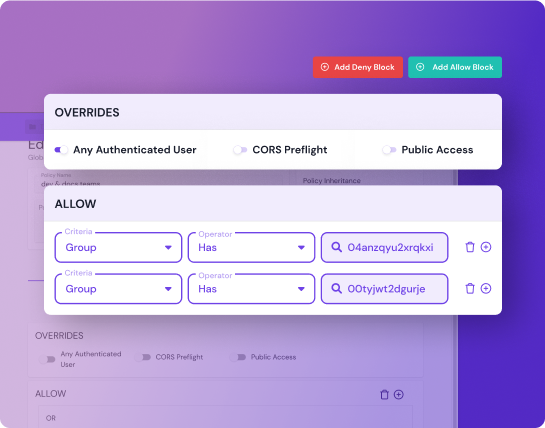

Manage users, routes, and policies with ease in Zero’s slick web-based UI.

Intuitive API

Add access control the same way you ship code.

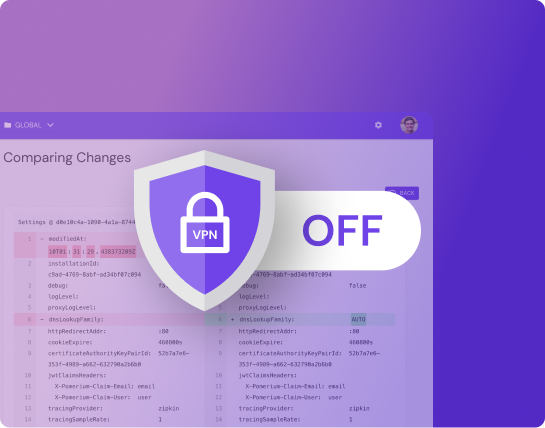

Your data stays private

You host the proxy, so we can’t see anything you don’t want us to.

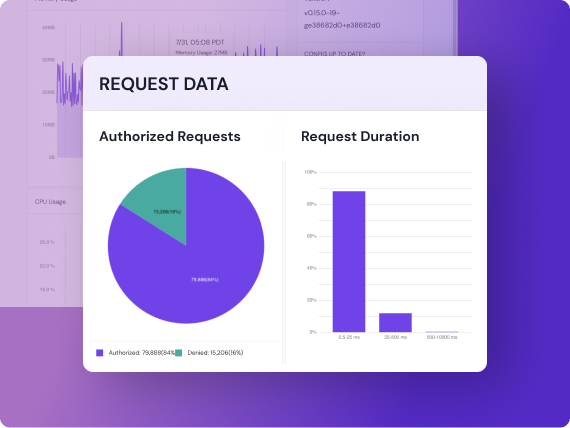

Let your users take the direct flight

No extra hops means every user’s request is faster, and cheaper too, since there are no added ingress or egress costs.

Administer at scale

Native multi-tenancy and clustering support even the largest and most heterogeneous infrastructure environments.